Gesture recognition using top-view depth data

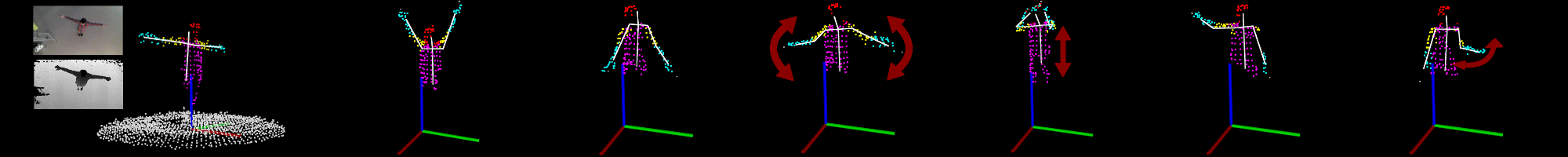

Visual tracking of the human body and gesture recognition are key technologies in areas such as video surveillance, security, entertainment, and natural human-machine interaction. The advent of depth sensors capable of 3D scene reconstruction has made human tracking more reliable. Most current approaches rely on side-view sensors, i.e. a standing person must directly face the sensor. Certain applications, however, require the sensor to be placed away from the scene in which the user moves. For one of our partners we designed and implemented a system for real-time human tracking and recognition of predefined gestures, using depth data acquired from a Microsoft Kinect sensor installed directly above the detection region.

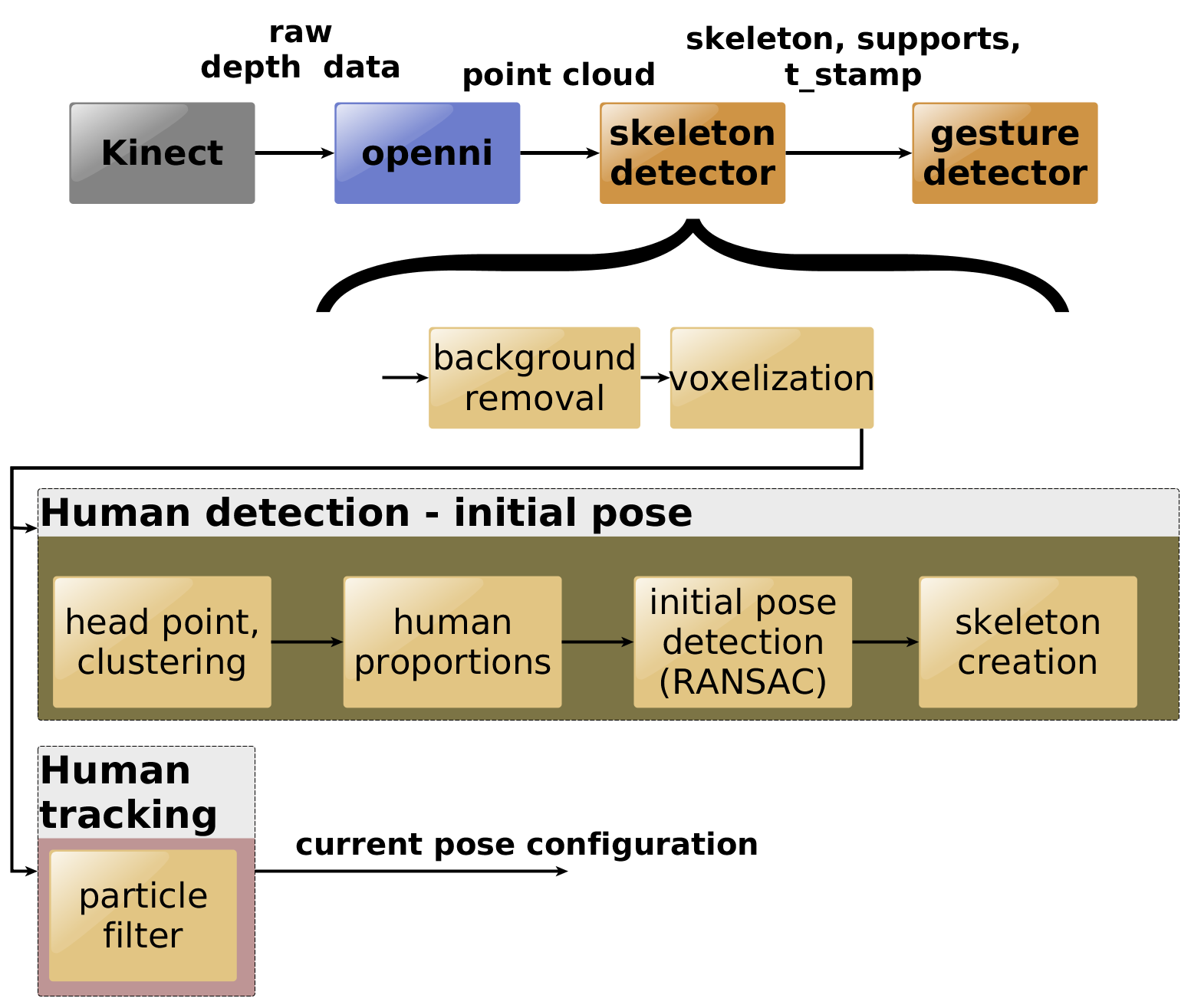

System overview

The system reliably detects and tracks a person and recognizes predefined gestures. No prior knowledge about the person to be tracked is required, as the system derives all the necessary information autonomously during the human detection phase. Crowded environments do not limit the system’s performance either, because it selectively tracks only the previously detected person. The system was tested thoroughly on multiple subjects with different body heights and shapes and achieved a precision exceeding 92 %. Thanks to the optimized C++ implementation together with GPU acceleration using the CUDA framework, it runs in real time at more than 30 FPS. These results make the system well suited to real-time applications.
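The selective tracking idea can be illustrated with a small sketch. It is not the system's actual tracker, which is not described here. It only shows one common way to keep following a single person among several: nearest-neighbour matching with a distance gate. The names `Point2D`, `match_target` and `max_jump` are hypothetical and introduced purely for illustration.

```cpp
#include <cmath>
#include <cstddef>
#include <optional>
#include <vector>

// Hypothetical 2D position of a detected person in the floor plane (metres).
struct Point2D {
    double x;
    double y;
};

// Returns the index of the candidate closest to the last known target
// position, ignoring every candidate farther away than max_jump.
// Returns an empty optional when no candidate lies within the gate.
std::optional<std::size_t> match_target(const Point2D& last,
                                        const std::vector<Point2D>& candidates,
                                        double max_jump) {
    std::optional<std::size_t> best;
    double best_dist = max_jump;
    for (std::size_t i = 0; i < candidates.size(); ++i) {
        const double d = std::hypot(candidates[i].x - last.x,
                                    candidates[i].y - last.y);
        if (d <= best_dist) {
            best_dist = d;
            best = i;
        }
    }
    return best;
}
```

Called once per frame with the detections of that frame, such a function would keep the track on the originally detected person even when other people enter the scene, provided the target moves less than `max_jump` between frames.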

Hardware

The data is obtained from a Microsoft Kinect for Xbox 360 sensor mounted at a height of 3.8 – 5.5 m above the ground. Acquired images are processed on a high-performance PC with the following hardware: CPU Intel Core i5 4590 @ 3.3 GHz (4 cores), GPU Nvidia GeForce GTX 760, 4 GB RAM.
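With the sensor looking straight down, a depth reading can be turned into a height above the floor by subtracting it from the mounting height. The following is a minimal sketch of that idea, assuming a level floor, depth values in millimetres measured along the optical axis, and 0 marking invalid pixels. `DepthFrame`, `Peak` and `highest_point` are hypothetical names, and the highest-point search is only a simple stand-in for a head detector, not the method the system uses.

```cpp
#include <cstddef>
#include <cstdint>
#include <optional>
#include <vector>

// Hypothetical container for one top-view depth frame.
struct DepthFrame {
    std::size_t width;
    std::size_t height;
    std::vector<std::uint16_t> depth_mm;  // row-major, 0 = no measurement
};

// Pixel position and height above the floor of the tallest point found.
struct Peak {
    std::size_t x;
    std::size_t y;
    int height_mm;
};

// Converts each depth value to height above the floor
// (mount height minus depth) and returns the highest pixel that is
// at least min_height_mm tall, or an empty optional if there is none.
std::optional<Peak> highest_point(const DepthFrame& frame,
                                  int mount_height_mm,
                                  int min_height_mm) {
    std::optional<Peak> best;
    for (std::size_t y = 0; y < frame.height; ++y) {
        for (std::size_t x = 0; x < frame.width; ++x) {
            const std::uint16_t d = frame.depth_mm[y * frame.width + x];
            if (d == 0) continue;  // skip invalid pixels
            const int h = mount_height_mm - static_cast<int>(d);
            if (h < min_height_mm) continue;  // floor or low objects
            if (!best || h > best->height_mm) best = Peak{x, y, h};
        }
    }
    return best;
}
```

For a sensor mounted at 4 m, a pixel with a depth of 2300 mm corresponds to a point 1700 mm above the floor, which is a plausible head height for a standing person.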

Software

The system is an optimized C++ implementation built on the ROS (Robot Operating System) framework; the most demanding parts of the system are accelerated on the GPU using the CUDA framework.

Applications

The system is suitable for applications that require an unobtrusive sensor together with high precision and reliability, for example seamless human-computer interaction, entertainment solutions, and security applications. As for existing deployments, our system was adopted as an entertainment solution that let a user control a kinetic glass installation exhibited by Lasvit, a Czech manufacturer of luxury glass installations and lighting collections, at the Euroluce 2015 exhibition held in Milan in April 2015.

Awards

The system was presented at Excel@FIT 2015, a student competition conference of innovation, technology and science in IT, held by the Faculty of Information Technology, Brno University of Technology, on 30 April 2015. The authors were awarded the 1st prize for the excellent idea, the 2nd prize for the innovation potential, the 3rd prize for the business potential and the 4th prize for the social contribution.